Replacing ngrok with Nginx Proxy Manager (NPM)

Target audience: Developers who work with containerized applications on a dedicated server on a local network. This is an alternative to using ngrok, localtunnel, hosts-file entries, etc. for reaching the containerized applications by meaningful names. If you need remote (off-site) access to the same applications, NPM will work for that as well. However, this post is not about that.

These "instructions" come with the usual caveats. This works for me and my setup. Your mileage may vary, greatly, from mine.

Up until now I have been using ngrok (and I will continue to use the free version). But with ngrok's recent(?) price increase and my limited use of it, I was looking for a replacement. In sailed Nginx Proxy Manager: a bit more involved, for lack of better words, than just paying for and starting ngrok, but way, way more fun!

In my setup, the deployment server and development laptop are both on the same network segment. This network is firewalled, so I can't (nor should I) use certbot to manage certificates and/or use a remote DNS provider (e.g. Cloudflare). That would, of course, have been too easy.

Let me emphasize: if you can use certbot with a DNS challenge for Cloudflare or any of the other supported services, do so! If not, read on.

I wanted wildcard DNS names to resolve to my deployment server.

Enter dnsmasq and systemd-resolved.

On my Fedora 36 laptop, this proved to be fairly easy [1].

/etc/dnsmasq.conf contains a single line:

```
address=/rrdigital.dev/10.100.10.63
```

where 10.100.10.63 is the eth0 interface of my deployment server.
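To make the matching rule concrete, here is a small Python model of what an `address=/domain/ip` line does for A queries: it answers for the domain itself and for every name below it, at any depth. This is a simplified sketch, not dnsmasq's code. The function names are made up, it ignores AAAA records, /etc/hosts overrides and everything else dnsmasq does, and the "most specific domain wins" tie-break is an assumption of the model.

```python
from __future__ import annotations


def parse_address_line(line: str) -> tuple[list[str], str]:
    """Split 'address=/rrdigital.dev/10.100.10.63' into (['rrdigital.dev'], '10.100.10.63')."""
    key, _, value = line.strip().partition("=")
    if key != "address":
        raise ValueError(f"not an address= line: {line!r}")
    parts = value.strip("/").split("/")
    if len(parts) < 2:
        raise ValueError(f"expected /domain/.../ip in {line!r}")
    return parts[:-1], parts[-1]  # one or more domains, then the IP


def in_domain(qname: str, domain: str) -> bool:
    """True if qname equals domain or is any subdomain of it (case-insensitive)."""
    q = qname.lower().rstrip(".")
    d = domain.lower().rstrip(".")
    return q == d or q.endswith("." + d)


def answer(config_lines: list[str], qname: str) -> str | None:
    """Return the IP this model would answer with, or None if no address= line matches."""
    best_domain, best_ip = None, None
    for line in config_lines:
        if not line.strip().startswith("address="):
            continue  # the model only looks at address= lines
        domains, ip = parse_address_line(line)
        for domain in domains:
            # Modeling assumption: the longest (most specific) matching domain wins.
            if in_domain(qname, domain) and (best_domain is None or len(domain) > len(best_domain)):
                best_domain, best_ip = domain, ip
    return best_ip


config = ["address=/rrdigital.dev/10.100.10.63"]
for name in ["gday.rrdigital.dev", "a.b.rrdigital.dev", "rrdigital.dev", "notrrdigital.dev"]:
    print(name, "->", answer(config, name))
```

The first three names map to 10.100.10.63 and `notrrdigital.dev` maps to None, which is why the check compares dot-separated suffixes rather than plain string suffixes.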

/etc/systemd/resolved.conf also contains a single setting, under its [Resolve] section:

```
[Resolve]
DNS=127.0.0.1
```

To see if this works:

```
$ sudo systemctl start dnsmasq.service
$ sudo systemctl restart systemd-resolved.service
$ resolvectl dns
Global: 127.0.0.1
Link 2 (enp11s0): ...
```

```
$ dig gday.rrdigital.dev

; <<>> DiG 9.16.31-RH <<>> gday.rrdigital.dev
;; global options: +cmd
;; Got answer:
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 40760
;; flags: qr rd ra; QUERY: 1, ANSWER: 1, AUTHORITY: 0, ADDITIONAL: 1

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 65494
;; QUESTION SECTION:
;gday.rrdigital.dev. IN A

;; ANSWER SECTION:
gday.rrdigital.dev. 0 IN A 10.100.10.63
...
```

So, no matter what you put in front of rrdigital.dev, it will resolve to 10.100.10.63. Excellent!
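`dig` only proves a single name. A quick way to convince yourself the wildcard really holds is to resolve a handful of random names through the system resolver and compare them with the expected address. Below is a minimal Python sketch. The function name and sample count are arbitrary, and the resolver is a parameter so the logic can also be exercised without dnsmasq.

```python
import secrets
import socket
from typing import Callable, Dict, Optional, Tuple


def check_wildcard(
    domain: str,
    expected_ip: str,
    resolver: Callable[[str], str] = socket.gethostbyname,
    samples: int = 5,
) -> Tuple[bool, Dict[str, Optional[str]]]:
    """Resolve random <label>.<domain> names and report whether all map to expected_ip."""
    results: Dict[str, Optional[str]] = {}
    for _ in range(samples):
        name = f"{secrets.token_hex(4)}.{domain}"  # e.g. '3f9a1c2e.rrdigital.dev'
        try:
            results[name] = resolver(name)
        except OSError:  # socket.gaierror is a subclass of OSError
            results[name] = None  # name did not resolve at all
    ok = all(ip == expected_ip for ip in results.values())
    return ok, results
```

On the laptop, `check_wildcard("rrdigital.dev", "10.100.10.63")` should come back with `True` as its first element. The second element lists every random name that was tried and the address it resolved to, or None if it failed.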

If you don't already have a local signing authority and are unsure how to create the authority and the necessary certs, download the two scripts from https://github.com/BenMorel/dev-certificates (heck, download them anyway; it's much easier than having to google the commands).

```
$ sh create-ca.sh
$ ls
ca.crt ca.key
$ sh create-certificate.sh "*.rrdigital.dev"
$ ls
ca.crt ca.key *.rrdigital.dev.crt *.rrdigital.dev.key
$ mv "*.rrdigital.dev.crt" rrdigital.dev.crt
$ mv "*.rrdigital.dev.key" rrdigital.dev.key
```

... and, for good measure:

```
$ cat ca.key > ca.pem
$ cat ca.crt >> ca.pem
$ ls
ca.crt ca.key ca.pem rrdigital.dev.crt rrdigital.dev.key
```
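One thing worth knowing about the `*.rrdigital.dev` certificate: dnsmasq resolves `rrdigital.dev` and `a.b.rrdigital.dev` just as happily as `gday.rrdigital.dev`, but TLS clients let a wildcard stand in for exactly one left-most label. So the certificate covers `gday.rrdigital.dev`, but not the bare domain and not deeper names. Below is a simplified Python sketch of that rule. The function name is made up, and real clients also handle IDNA names, partial-label wildcards and IP address SANs, which this ignores.

```python
def wildcard_covers(pattern: str, hostname: str) -> bool:
    """Simplified check: does a certificate name (possibly '*.x') cover hostname?"""
    p_labels = pattern.lower().rstrip(".").split(".")
    h_labels = hostname.lower().rstrip(".").split(".")
    if len(p_labels) != len(h_labels):
        return False  # a wildcard never spans more (or fewer) labels
    if p_labels[0] == "*":
        # '*' replaces exactly one non-empty left-most label; the rest must match exactly.
        return h_labels[0] != "" and p_labels[1:] == h_labels[1:]
    return p_labels == h_labels


for host in ["gday.rrdigital.dev", "rrdigital.dev", "a.b.rrdigital.dev"]:
    print(host, wildcard_covers("*.rrdigital.dev", host))
```

This prints True for the first name and False for the other two. If you also want the bare domain or deeper names over HTTPS, the certificate needs them as additional subject alternative names.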

ca.crt (and ca.key) is your signing authority. Add ca.crt to your browser and/or local CA store, and remember to run update-ca-trust afterwards (on Fedora, the file goes in /etc/pki/ca-trust/source/anchors/).

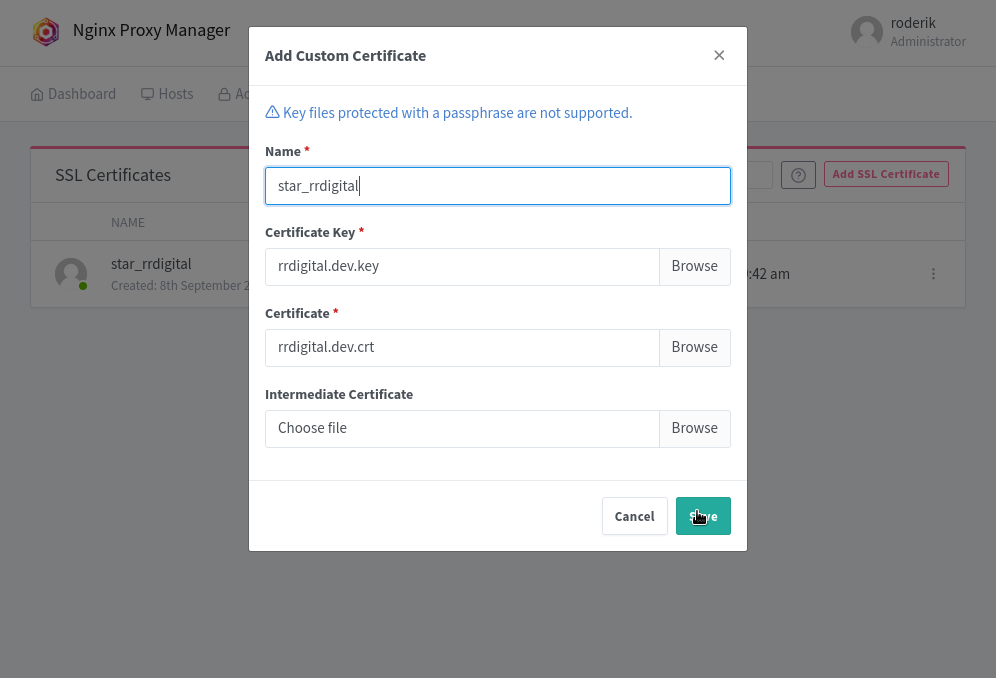

rrdigital.dev.key/.crt are your nginx certificates, and ca.pem is for the off chance you are going to use the Python certifi package.
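For the Python case, here is a minimal sketch using only the standard library. It starts from the system trust store and adds the local CA on top, so HTTPS calls to your `*.rrdigital.dev` hosts verify without switching certificate checks off. `ca.crt` is enough for verification; the private key inside `ca.pem` plays no part in it. The helper names are made up. With `requests`, pointing its `verify=` argument or the `REQUESTS_CA_BUNDLE` environment variable at the CA file does the same job.

```python
import ssl
import urllib.request
from typing import Optional


def local_ca_context(cafile: Optional[str] = None) -> ssl.SSLContext:
    """System trust store, plus the local CA if a path is given. Verification stays on."""
    ctx = ssl.create_default_context()  # CERT_REQUIRED and hostname checking by default
    if cafile is not None:
        ctx.load_verify_locations(cafile=cafile)  # e.g. the ca.crt produced by create-ca.sh
    return ctx


def fetch_status(url: str, cafile: Optional[str] = None, timeout: float = 5.0) -> int:
    """GET url and return the HTTP status code, verifying TLS against local_ca_context()."""
    ctx = local_ca_context(cafile)
    with urllib.request.urlopen(url, context=ctx, timeout=timeout) as resp:
        return resp.status
```

Usage, on a machine that resolves the wildcard: `fetch_status("https://gday.rrdigital.dev/", cafile="ca.crt")`.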

We are now done with the laptop; let's jump over to the development server.

My server is running Arch (don't ask) and I had some challenges with the dnsmasq and systemd-resolved setup.

In /etc/dnsmasq.conf I had to add:

```
no-resolv
server=/localhost/127.0.0.1
address=/rrdigital.dev/10.100.10.63
```

In /etc/systemd/resolved.conf I had to add (under the [Resolve] section):

```
[Resolve]
DNS=127.0.0.1
DNSStubListener=no
```

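And finally, in /etc/resolv.conf I added

```
nameserver 127.0.0.1
```

at the top of the file. I also ran

```
$ sudo chattr +i /etc/resolv.conf
```

to make sure no process can change the file (sudo chattr -i /etc/resolv.conf undoes this when you need to edit the file yourself).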
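Why the top of the file matters: the glibc resolver tries the `nameserver` entries in the order they appear (unless `options rotate` is set), and it only uses the first three. Putting `127.0.0.1` first therefore makes dnsmasq the first server asked. Below is a small Python sketch that reads the file and reports that order. The function names are made up, and the parser only understands plain `nameserver` lines and whole-line comments.

```python
MAXNS = 3  # glibc only consults the first three nameserver lines


def nameservers(resolv_conf: str) -> list:
    """Return nameserver addresses in the order the resolver will try them."""
    servers = []
    for raw in resolv_conf.splitlines():
        line = raw.strip()
        if not line or line[0] in "#;":
            continue  # blank line or comment line
        fields = line.split()
        if fields[0] == "nameserver" and len(fields) >= 2:
            servers.append(fields[1])
    return servers[:MAXNS]


def dnsmasq_first(path: str = "/etc/resolv.conf") -> bool:
    """True if the first nameserver in the file is the local dnsmasq."""
    with open(path) as fh:
        servers = nameservers(fh.read())
    return bool(servers) and servers[0] == "127.0.0.1"
```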

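In total, this gives me the same functionality as on my Fedora laptop. I had expected no-resolv to render /etc/resolv.conf unusable, but it only stops dnsmasq itself from reading that file for upstream servers; the rest of the system still uses it to find dnsmasq. Why the whole combination works I can't fully explain, but hey, I'm not one to look a gift horse in the mouth.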
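On the DNSStubListener setting: the usual reason it has to be switched off next to dnsmasq is a port clash. systemd-resolved's stub listener holds port 53 on 127.0.0.53, while dnsmasq by default wants port 53 on all addresses. Below is a Python sketch for checking whether a UDP port is already held by something else. The function name is made up, and probing port 53 needs root.

```python
import errno
import socket


def udp_port_in_use(host: str, port: int) -> bool:
    """Try to bind host:port over UDP; True if something else already holds it."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        try:
            sock.bind((host, port))
        except OSError as exc:
            if exc.errno == errno.EADDRINUSE:
                return True
            raise  # e.g. PermissionError when probing port 53 without root
    return False  # bind succeeded, so the port was free (the socket is closed again)
```

If you run it as root with dnsmasq stopped, `udp_port_in_use("0.0.0.0", 53)` returning `True` suggests something else, such as the stub listener, still holds the port.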

Onwards!

Running the Nginx Proxy Manager Docker image:

```yaml
# docker-compose.yml
version: "3"
services:
  app:
    image: 'jc21/nginx-proxy-manager:latest'
    restart: unless-stopped
    ports:
      # These ports are in format <host-port>:<container-port>
      - '80:80' # Public HTTP Port
      - '443:443' # Public HTTPS Port
      - '81:81' # Admin Web Port
    volumes:
      - ./data:/data
      - ./letsencrypt:/etc/letsencrypt

networks:
  default:
    external:
      name: proxy
```

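The proxy network is declared as external, so Compose expects it to exist already. Create it once, then start the stack:

```
$ docker network create proxy
$ docker-compose up -d
```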

To spare yourself a lot of grief, make sure each and every podman/docker container you run IS ON THE SAME FREAKING NETWORK. So add

```yaml
networks:
  default:
    external:
      name: proxy
```

to each and every one of your docker-compose files. This will allow you to address the containers by name in Nginx Proxy Manager.
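With several compose files lying around, it's easy to forget one. Below is a small Python sketch that checks an already-parsed compose file for the external `proxy` default network. It expects a plain dict, which you could get from PyYAML's `yaml.safe_load` (a third-party package). It accepts both the `external: name:` form used above and the newer `name:` plus `external: true` form. The function name is made up, and it only looks at the top-level default network, not at services that override `networks:` themselves.

```python
from typing import Any, Mapping


def uses_shared_network(compose: Mapping[str, Any], network: str = "proxy") -> bool:
    """True if the compose file's default network is the external network `network`."""
    default = (compose.get("networks") or {}).get("default") or {}
    external = default.get("external")
    if isinstance(external, Mapping):
        # Older form:  external: {name: proxy}
        return external.get("name") == network
    # Newer form:  name: proxy  plus  external: true
    return external is True and default.get("name") == network
```

Usage, with PyYAML installed: `uses_shared_network(yaml.safe_load(open("docker-compose.yml")))`.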

With 65k addresses in the allotted range, there's little to no chance of running out of IP addresses.
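The 65k figure is simply the size of a /16. The arithmetic below uses Python's `ipaddress` module and assumes Docker gave the `proxy` network a /16, which its default address pool usually does for the first user-defined networks. `docker network inspect proxy` shows the subnet you actually got, and the one below is a placeholder.

```python
import ipaddress

# Placeholder subnet; substitute whatever `docker network inspect proxy` reports.
subnet = ipaddress.ip_network("172.18.0.0/16")

total = subnet.num_addresses      # 2**16 addresses in a /16
usable = total - 2                # minus the network and broadcast addresses
containers = usable - 1           # minus the bridge gateway (usually the .1 address)
print(total, usable, containers)  # 65536 65534 65533
```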

Instructions for getting started with Nginx Proxy Manager can be found here. I will only touch upon the parts that relate to self-signed certs.

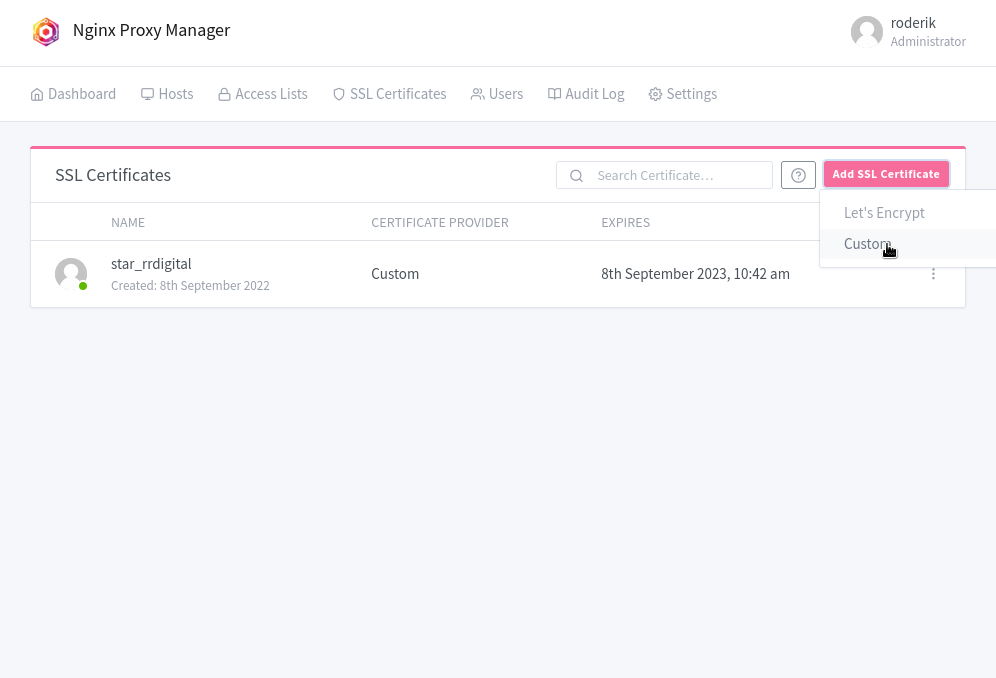

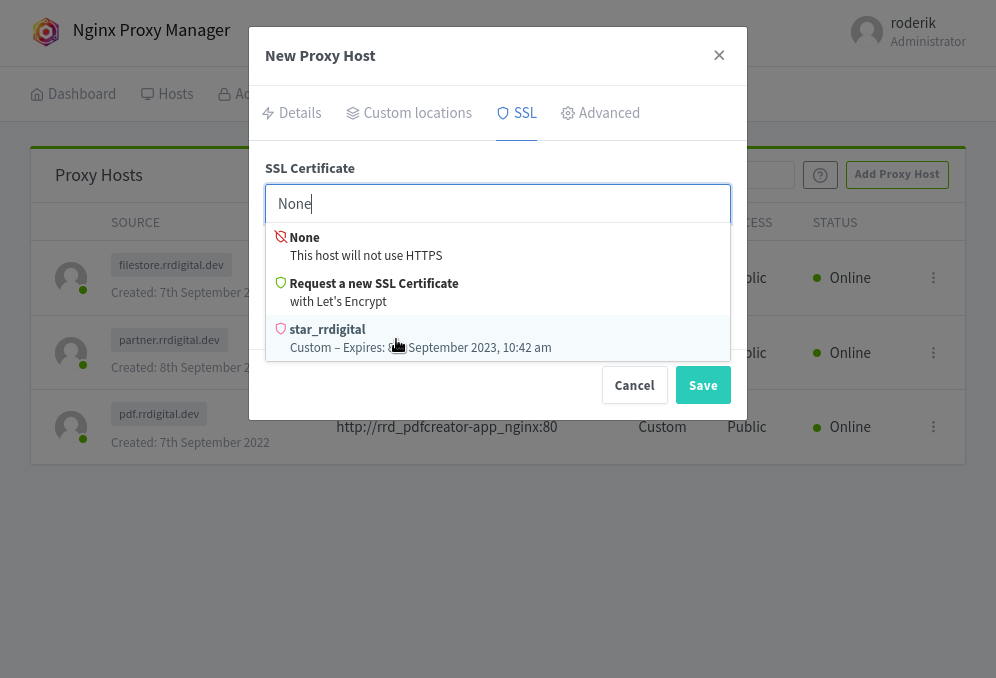

Add custom certificates:

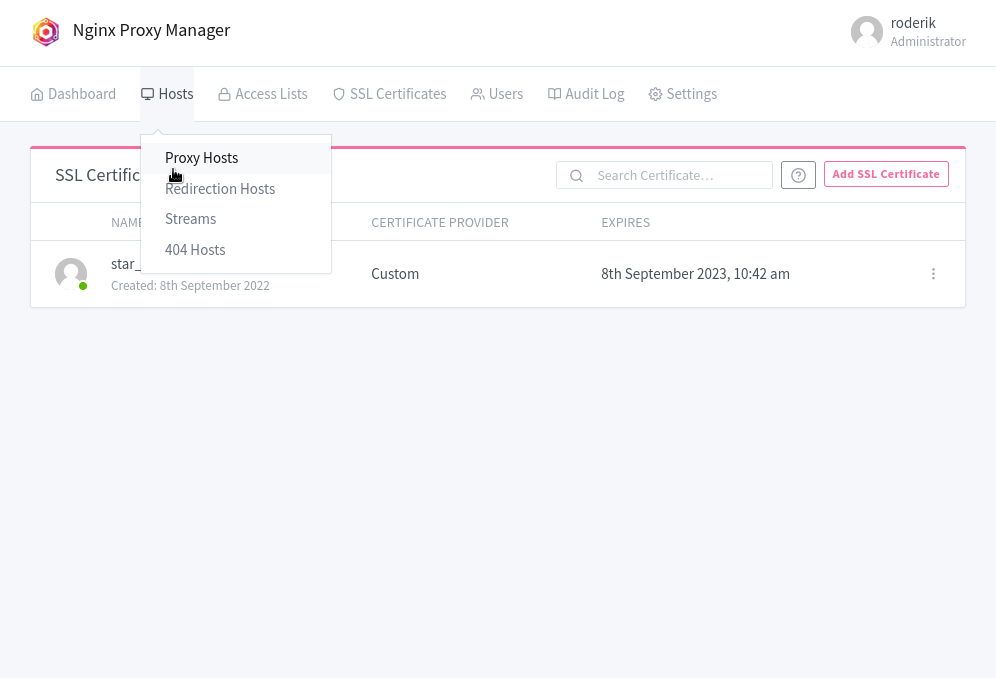

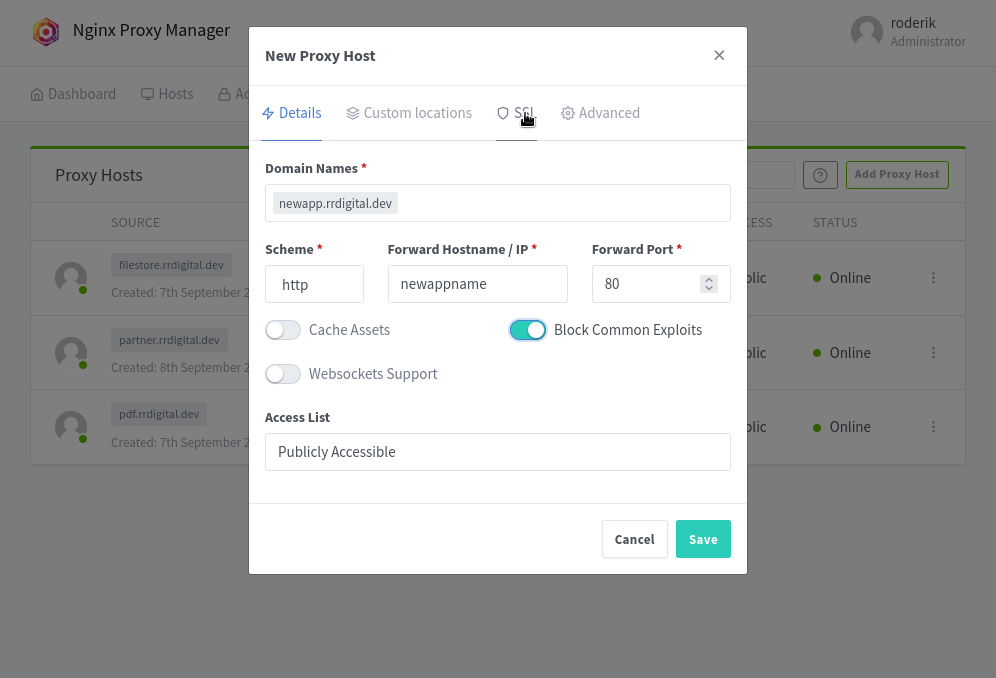

Add proxy host(s).

Notice that the docker-compose container name is what goes in the forward hostname field.

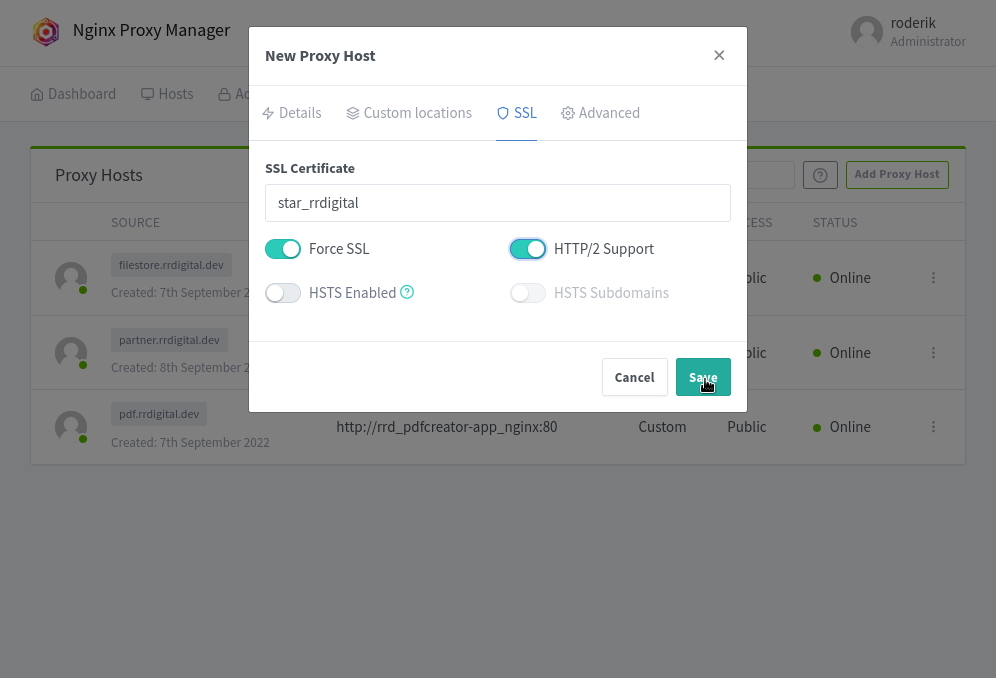

Add your SSL certificate.

Make sure HSTS is not enabled (you are on a local, firewalled network; there's no need for it).

Save, and you're done. The first host is set up and, hopefully, working as advertised.
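Conceptually, each proxy host is one entry in a lookup table keyed by the requested host name. NPM terminates TLS with the wildcard certificate and forwards the request to the container name and port you entered. Docker's embedded DNS resolves that container name because both containers sit on the `proxy` network. Below is a toy Python model of the lookup. The host names, container names and ports are hypothetical, and NPM itself does this through the nginx configuration it generates, not code like this.

```python
from typing import Dict, NamedTuple, Optional


class Upstream(NamedTuple):
    scheme: str
    host: str  # docker-compose container name on the shared network
    port: int


# Hypothetical proxy hosts, one per NPM "Proxy Host" entry.
PROXY_HOSTS: Dict[str, Upstream] = {
    "gday.rrdigital.dev": Upstream("http", "gday-app", 8080),
    "api.rrdigital.dev": Upstream("http", "api", 3000),
}


def route(host_header: str) -> Optional[str]:
    """Map an incoming Host header to an upstream URL, or None if no proxy host matches."""
    name = host_header.split(":", 1)[0].lower().rstrip(".")  # drop any :port suffix
    upstream = PROXY_HOSTS.get(name)
    if upstream is None:
        return None
    return f"{upstream.scheme}://{upstream.host}:{upstream.port}"


print(route("gday.rrdigital.dev"))     # http://gday-app:8080
print(route("API.rrdigital.dev:443"))  # http://api:3000
print(route("unknown.rrdigital.dev"))  # None
```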

- All your containers can, if so inclined, speak to each other.
- Your local DNS will resolve anything.rrdigital.dev (feel free to change the domain name).
- NPM will proxy all requests to *.rrdigital.dev.

From a development standpoint, this is golden. I don't have to remember to shut down all the proxy processes (i.e. ngrok, localtunnel and friends) before logging off for the day.

This setup works with the company VPN as well, so if I need to work from home one day, I can do so without any degradation.

I think that's it. I'll probably revisit this write-up as I gather more experience with, and understanding of, the setup.

[1] It did take me an annoyingly long time to land on dnsmasq and systemd-resolved. I had *deity of choice (or not)* only knows how many failed tries at this.